LuxDiT

This is the luxdit_base checkpoint for LuxDiT: Lighting Estimation with Video Diffusion Transformer. It is finetuned on image data and includes a LoRA adapter for real scenes.

Model description

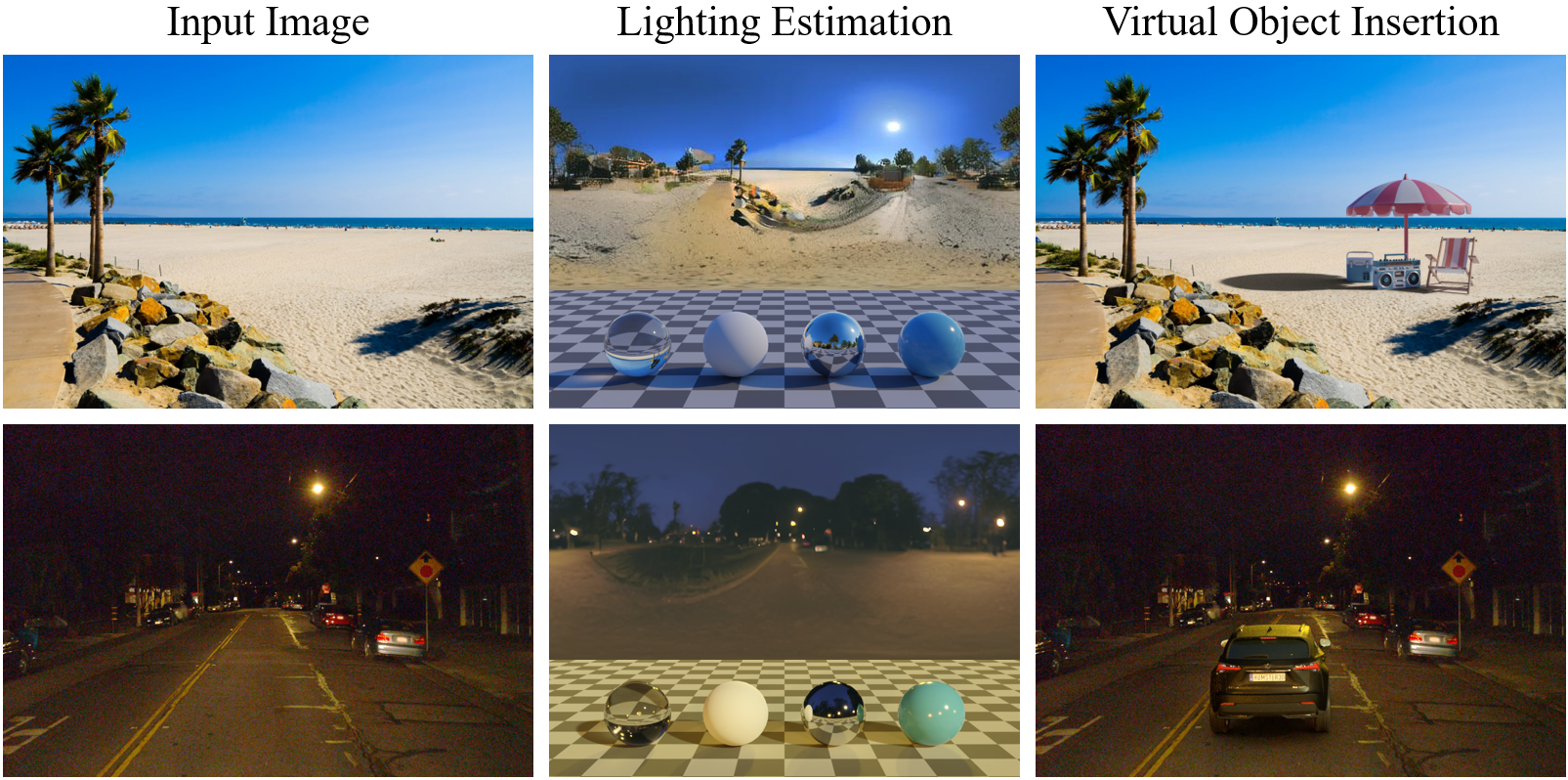

LuxDiT is a generative lighting estimation model that predicts high-quality HDR environment maps from visual input. It produces accurate lighting while preserving scene semantics, enabling realistic virtual object insertion under diverse lighting conditions. This model is ready for non-commercial use.

- Checkpoint: Base model (image-finetuned)

- LoRA: Included for real-scene generalization

- Paper: LuxDiT: Lighting Estimation with Video Diffusion Transformer

- Project page: https://research.nvidia.com/labs/toronto-ai/LuxDiT/

Use case: LuxDiT supports research and prototyping in video lighting estimation. This release is an open-source implementation of our research paper, intended for AI research, development, and benchmarking in lighting estimation.

Model architecture

- Architecture type: Transformer (based on CogVideoX)

- Parameters: 5B

- Input: RGB image frames with shape `[batch_size, num_frames, height, width, 3]`; recommended resolution 480×720

- Output: RGB image frames (dual tonemapped LDR and log); output resolution 256×512; use the HDR merger to obtain `.exr` HDR envmaps
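The input layout above can be prepared with a few lines of NumPy. This is a minimal sketch, not part of the LuxDiT code: `to_model_input` and `resize_nearest` are hypothetical helpers, nearest-neighbour resizing stands in for whatever filter the repository actually uses, and because this card does not specify the pixel value range the model expects, the sketch leaves pixel values untouched.

```python
import numpy as np

RECOMMENDED_HW = (480, 720)  # recommended input resolution from this card


def resize_nearest(frame: np.ndarray, height: int, width: int) -> np.ndarray:
    """Nearest-neighbour resize of an (H, W, 3) array (a real pipeline would likely use a smoother filter)."""
    rows = (np.arange(height) * frame.shape[0] / height).astype(int)
    cols = (np.arange(width) * frame.shape[1] / width).astype(int)
    return frame[rows][:, cols]


def to_model_input(frames: list) -> np.ndarray:
    """Stack RGB frames into [batch_size=1, num_frames, height, width, 3]."""
    h, w = RECOMMENDED_HW
    resized = [resize_nearest(f, h, w) for f in frames]
    return np.stack(resized)[None, ...]  # add the leading batch axis
```

A single image becomes a one-frame clip, e.g. `to_model_input([img])` on a 1080×1920 photo yields shape `(1, 1, 480, 720, 3)`.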

Software and hardware

- Runtime: Python and PyTorch

- Supported hardware: NVIDIA Ampere (e.g. A100 GPUs)

- Operating system: Linux
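A quick pre-flight check before loading the 5B-parameter transformer might look like the sketch below. The helper names are hypothetical. The 2-bytes-per-parameter figure assumes bf16 or fp16 weights (this card does not state the inference precision) and covers the transformer weights only, not activations or any other model components. Treating every compute capability of 8.0 or higher as acceptable is also an assumption, since the card only lists Ampere.

```python
import importlib.util

NUM_PARAMS = 5e9  # "Parameters: 5B" from this card


def weight_memory_gib(num_params: float, bytes_per_param: int = 2) -> float:
    """Memory for the transformer weights alone (assumed bf16/fp16, i.e. 2 bytes per parameter)."""
    return num_params * bytes_per_param / 2**30


def is_ampere_or_newer(capability: tuple) -> bool:
    """Ampere GPUs report CUDA compute capability 8.x (A100 is 8.0)."""
    return tuple(capability) >= (8, 0)


if __name__ == "__main__":
    print(f"~{weight_memory_gib(NUM_PARAMS):.1f} GiB for the transformer weights")
    if importlib.util.find_spec("torch") is not None:
        import torch

        if torch.cuda.is_available():
            cap = torch.cuda.get_device_capability(0)
            status = "Ampere or newer" if is_ampere_or_newer(cap) else "pre-Ampere"
            print(torch.cuda.get_device_name(0), cap, status)
        else:
            print("No CUDA device visible")
```

Under these assumptions the weights alone take about 9.3 GiB.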

How to use

Download from Hugging Face

From the LuxDiT repository root:

```bash
python download_weights.py --repo_id <HF_ORG>/luxdit_base
```

This saves the checkpoint to checkpoints/luxdit_base by default. Use --local_dir to override.
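If you prefer to fetch the weights from Python, `huggingface_hub.snapshot_download` can mirror a repository into a local folder. This sketch assumes `download_weights.py` does essentially the same thing; `default_local_dir` and `download_checkpoint` are hypothetical names, and `<HF_ORG>` remains a placeholder for the actual organization.

```python
from pathlib import Path
from typing import Optional


def default_local_dir(repo_id: str) -> Path:
    """checkpoints/<repo name>, matching the default described above (e.g. checkpoints/luxdit_base)."""
    return Path("checkpoints") / repo_id.rstrip("/").split("/")[-1]


def download_checkpoint(repo_id: str, local_dir: Optional[str] = None) -> Path:
    """Download every file in the model repo into local_dir (requires `pip install huggingface_hub`)."""
    from huggingface_hub import snapshot_download

    target = Path(local_dir) if local_dir else default_local_dir(repo_id)
    snapshot_download(repo_id=repo_id, local_dir=str(target))
    return target
```

For example, `download_checkpoint("<HF_ORG>/luxdit_base")` would place the files under `checkpoints/luxdit_base`.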

Inference: synthetic images (in-domain)

```bash
DIT_PATH=checkpoints/luxdit_base
INPUT_DIR=examples/input_demo/synthetic_images
OUTPUT_DIR=test_output/synthetic_images

python inference_luxdit.py \
  --config configs/luxdit_base.yaml \
  --transformer_path "$DIT_PATH" \
  --input_dir "$INPUT_DIR" \
  --output_dir "$OUTPUT_DIR" \
  --resolution 480 720 \
  --guidance_scale 2.5 \
  --num_inference_steps 50 \
  --seed 33

python hdr_merger.py \
  --model_path checkpoints/hdr_merge_mlp \
  --input_dir "$OUTPUT_DIR/ldr_log" \
  --output_dir "$OUTPUT_DIR/hdr"
```
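To script several runs (for example over different input folders or seeds), the two commands can be assembled in Python. This is a hypothetical wrapper around the CLIs shown on this card: it uses only flags that appear here and assumes the scripts accept them in any order, as argparse-style parsers do. The optional `lora_dir`/`lora_scale` arguments correspond to the real-scene variant of the command.

```python
import subprocess
from typing import List, Optional


def luxdit_commands(
    input_dir: str,
    output_dir: str,
    dit_path: str = "checkpoints/luxdit_base",
    lora_dir: Optional[str] = None,
    lora_scale: float = 0.8,
    guidance_scale: float = 2.5,
    num_inference_steps: int = 50,
    seed: int = 33,
    merger_model: Optional[str] = "checkpoints/hdr_merge_mlp",
) -> List[List[str]]:
    """Return argv lists for the diffusion step and the HDR merge step."""
    infer = [
        "python", "inference_luxdit.py",
        "--config", "configs/luxdit_base.yaml",
        "--transformer_path", dit_path,
        "--input_dir", input_dir,
        "--output_dir", output_dir,
        "--resolution", "480", "720",
        "--guidance_scale", str(guidance_scale),
        "--num_inference_steps", str(num_inference_steps),
        "--seed", str(seed),
    ]
    if lora_dir is not None:
        # Real-scene variant: load the LoRA adapter and set its strength.
        infer += ["--lora_dir", lora_dir, "--lora_scale", str(lora_scale)]

    merge = ["python", "hdr_merger.py"]
    if merger_model is not None:
        merge += ["--model_path", merger_model]
    # The merger reads the dual LDR/log outputs and writes .exr envmaps.
    merge += ["--input_dir", f"{output_dir}/ldr_log", "--output_dir", f"{output_dir}/hdr"]
    return [infer, merge]


def run_all(commands: List[List[str]]) -> None:
    """Run each step in order, stopping at the first failure."""
    for cmd in commands:
        subprocess.run(cmd, check=True)
```

From the repository root, `run_all(luxdit_commands("examples/input_demo/synthetic_images", "test_output/synthetic_images"))` would reproduce the shell commands above.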

Inference: real scenes (with LoRA)

Use the LoRA adapter in this checkpoint for better generalization to real photos:

```bash
DIT_PATH=checkpoints/luxdit_base
LORA_PATH=checkpoints/luxdit_base/lora
INPUT_DIR=examples/input_demo/scene_images
OUTPUT_DIR=test_output/scene_images

python inference_luxdit.py \
  --config configs/luxdit_base.yaml \
  --transformer_path "$DIT_PATH" \
  --lora_dir "$LORA_PATH" \
  --lora_scale 0.8 \
  --input_dir "$INPUT_DIR" \
  --output_dir "$OUTPUT_DIR" \
  --resolution 480 720 \
  --guidance_scale 2.5 \
  --num_inference_steps 50 \
  --seed 33

python hdr_merger.py \
  --input_dir "$OUTPUT_DIR/ldr_log" \
  --output_dir "$OUTPUT_DIR/hdr"
```

Adjust `--lora_scale` (e.g. within 0.0–1.0) to control the strength of the LoRA adapter, that is, how much the input scene is merged into the estimated envmap.
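For intuition about what the scale does: in a standard LoRA adapter, each adapted weight matrix receives a low-rank update B·A, and a scale factor multiplies that update. The NumPy sketch below assumes `--lora_scale` follows this common convention; it is illustrative only, not the LuxDiT loader, and any rank-dependent factor such as alpha/r is folded into `scale`.

```python
import numpy as np


def apply_lora(w_base: np.ndarray, lora_a: np.ndarray, lora_b: np.ndarray, scale: float) -> np.ndarray:
    """Return W = W_base + scale * (B @ A).

    w_base: (d_out, d_in); lora_a: (r, d_in); lora_b: (d_out, r), with r much smaller than d_out and d_in.
    scale = 0.0 recovers the base weights; scale = 1.0 applies the full adapter.
    """
    return w_base + scale * (lora_b @ lora_a)


rng = np.random.default_rng(0)
d_out, d_in, rank = 8, 6, 2
w = rng.normal(size=(d_out, d_in))
a = rng.normal(size=(rank, d_in))
b = rng.normal(size=(d_out, rank))

for s in (0.0, 0.8, 1.0):
    delta = apply_lora(w, a, b, s) - w
    print(s, np.linalg.norm(delta))  # the update's norm grows linearly with s
```

Because the update is linear in the scale, a value such as the 0.8 used above interpolates between the base weights and the full adapter in weight space, though not necessarily linearly in output quality.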

Related checkpoints

| Checkpoint | Description |
|---|---|
| luxdit_base (this model) | Image-finetuned, with LoRA for real scenes |
| luxdit_video | Video-finetuned, with LoRA for real scenes |

For video inputs and object scans, use luxdit_video instead.

Training data

The base model is trained on the SyntheticScenes dataset:

- Modality: Video

- Scale: ~108,000 rendered videos; each video has 57 frames at 704×1280 resolution

- Collection: Synthetic data generated with an OptiX-based physically based path tracer

- Labels: Produced by the renderer (no manual labeling)

- Per sample: Input RGB (LDR) video and HDR environment lighting

Testing and evaluation use held-out 10% splits of the same dataset.
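For a sense of scale, taking the approximate figures above at face value and assuming a single 10% hold-out counted in whole videos:

$$
108{,}000 \times 57 \approx 6.2 \times 10^{6}\ \text{frames}, \qquad 0.10 \times 108{,}000 \approx 10{,}800\ \text{held-out videos}
$$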

Output format

The model outputs dual tonemapped environment maps (LDR and log); use the HDR merger to get .exr HDR envmaps. By default, the camera pose of the input image defines the world frame. See the main README for the exact layout of LDR/log vs merged HDR.
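To illustrate why two tonemapped images can be enough to recover HDR values, here is a toy version of the idea: an LDR encoding that is precise below 1.0 but clips above it, and a log encoding that covers the full range. The specific curves (gamma 2.2, `log1p` normalized by a hypothetical `MAX_RADIANCE`) and the threshold-based merge are assumptions for illustration only; LuxDiT's actual encodings are defined in the repository, and its merger is a learned model loaded from `checkpoints/hdr_merge_mlp`, not this rule.

```python
import numpy as np

GAMMA = 2.2              # assumed display gamma for the LDR encoding
MAX_RADIANCE = 10_000.0  # hypothetical upper bound used to normalize the log encoding


def encode_ldr(hdr: np.ndarray) -> np.ndarray:
    # Clip to [0, 1], then gamma-encode: precise for dim regions, saturates on bright light sources.
    return np.clip(hdr, 0.0, 1.0) ** (1.0 / GAMMA)


def encode_log(hdr: np.ndarray) -> np.ndarray:
    # Log-compress the full dynamic range into [0, 1].
    return np.log1p(hdr) / np.log1p(MAX_RADIANCE)


def naive_merge(ldr: np.ndarray, log_img: np.ndarray, threshold: float = 0.95) -> np.ndarray:
    # Trust the LDR encoding where it is not saturated; otherwise invert the log encoding.
    from_ldr = ldr ** GAMMA
    from_log = np.expm1(log_img * np.log1p(MAX_RADIANCE))
    return np.where(ldr < threshold, from_ldr, from_log)


radiance = np.array([0.05, 0.5, 3.0, 800.0])  # from a dim wall to a bright light source
merged = naive_merge(encode_ldr(radiance), encode_log(radiance))
```

With exact encodings this round trip recovers the input. The model's two predicted encodings will not agree perfectly, which is presumably why the repository uses a learned merger rather than a hard threshold.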

Ethical considerations

NVIDIA believes Trustworthy AI is a shared responsibility. When using this model in accordance with the terms of service, ensure it meets requirements for your use case and addresses potential misuse. You are responsible for having proper rights and permissions for all input image and video content; if content includes people, personal health information, or intellectual property, generated outputs will not blur or preserve proportions of subjects. Users are responsible for model inputs and outputs and for implementing appropriate guardrails and safety mechanisms before deployment. To report model quality, risk, security vulnerabilities, or other concerns, see NVIDIA AI Concerns.

License

NVIDIA OneWay Noncommercial License. See the LICENSE in the LuxDiT repository.

Citation

```bibtex
@article{liang2025luxdit,
  title={{LuxDiT}: Lighting Estimation with Video Diffusion Transformer},
  author={Liang, Ruofan and He, Kai and Gojcic, Zan and Gilitschenski, Igor and Fidler, Sanja and Vijaykumar, Nandita and Wang, Zian},
  journal={arXiv preprint arXiv:2509.03680},
  year={2025}
}
```
