url stringlengths 58 61 | repository_url stringclasses 1 value | labels_url stringlengths 72 75 | comments_url stringlengths 67 70 | events_url stringlengths 65 68 | html_url stringlengths 46 51 | id int64 599M 1.12B | node_id stringlengths 18 32 | number int64 1 3.68k | title stringlengths 1 276 | user dict | labels list | state stringclasses 2 values | locked bool 1 class | assignee dict | assignees list | milestone null | comments sequence | created_at int64 1.59k 1,644B | updated_at int64 1.59k 1,694B | closed_at int64 1.59k 1,690B ⌀ | author_association stringclasses 3 values | active_lock_reason null | draft bool 2 classes | pull_request dict | body stringlengths 0 228k ⌀ | reactions dict | timeline_url stringlengths 67 70 | performed_via_github_app null | state_reason stringclasses 2 values | is_pull_request bool 2 classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/3678 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3678/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3678/comments | https://api.github.com/repos/huggingface/datasets/issues/3678/events | https://github.com/huggingface/datasets/pull/3678 | 1,123,402,426 | PR_kwDODunzps4yCt91 | 3,678 | Add code example in wikipedia card | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 1,643,911,742,000 | 1,645,434,896,000 | 1,643,980,899,000 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3678",

"html_url": "https://github.com/huggingface/datasets/pull/3678",

"diff_url": "https://github.com/huggingface/datasets/pull/3678.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3678.patch",

"merged_at": 1643980899000

} | Close #3292. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3678/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3678/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3677 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3677/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3677/comments | https://api.github.com/repos/huggingface/datasets/issues/3677/events | https://github.com/huggingface/datasets/issues/3677 | 1,123,192,866 | I_kwDODunzps5C8pAi | 3,677 | Discovery cannot be streamed anymore | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_... | null | [

"Seems like a regression from https://github.com/huggingface/datasets/pull/2843\r\n\r\nOr maybe it's an issue with the hosting. I don't think so, though, because https://www.dropbox.com/s/aox84z90nyyuikz/discovery.zip seems to work as expected\r\n\r\n",

"Hi @severo, thanks for reporting.\r\n\r\nSome servers do no... | 1,643,900,523,000 | 1,644,511,884,000 | 1,644,511,884,000 | CONTRIBUTOR | null | null | null | ## Describe the bug

A clear and concise description of what the bug is.

## Steps to reproduce the bug

```python

from datasets import load_dataset

iterable_dataset = load_dataset("discovery", name="discovery", split="train", streaming=True)

list(iterable_dataset.take(1))

```

## Expected results

The first row of the train split.

## Actual results

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/iterable_dataset.py", line 365, in __iter__

for key, example in self._iter():

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/iterable_dataset.py", line 362, in _iter

yield from ex_iterable

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/iterable_dataset.py", line 272, in __iter__

yield from islice(self.ex_iterable, self.n)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/iterable_dataset.py", line 79, in __iter__

yield from self.generate_examples_fn(**self.kwargs)

File "/home/slesage/.cache/huggingface/modules/datasets_modules/datasets/discovery/542fab7a9ddc1d9726160355f7baa06a1ccc44c40bc8e12c09e9bc743aca43a2/discovery.py", line 333, in _generate_examples

with open(data_file, encoding="utf8") as f:

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/streaming.py", line 64, in wrapper

return function(*args, use_auth_token=use_auth_token, **kwargs)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/datasets/utils/streaming_download_manager.py", line 369, in xopen

file_obj = fsspec.open(file, mode=mode, *args, **kwargs).open()

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/core.py", line 456, in open

return open_files(

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/core.py", line 288, in open_files

fs, fs_token, paths = get_fs_token_paths(

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/core.py", line 611, in get_fs_token_paths

fs = filesystem(protocol, **inkwargs)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/registry.py", line 253, in filesystem

return cls(**storage_options)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/spec.py", line 68, in __call__

obj = super().__call__(*args, **kwargs)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/implementations/zip.py", line 57, in __init__

self.zip = zipfile.ZipFile(self.fo)

File "/home/slesage/.pyenv/versions/3.9.6/lib/python3.9/zipfile.py", line 1257, in __init__

self._RealGetContents()

File "/home/slesage/.pyenv/versions/3.9.6/lib/python3.9/zipfile.py", line 1320, in _RealGetContents

endrec = _EndRecData(fp)

File "/home/slesage/.pyenv/versions/3.9.6/lib/python3.9/zipfile.py", line 263, in _EndRecData

fpin.seek(0, 2)

File "/home/slesage/hf/datasets-preview-backend/.venv/lib/python3.9/site-packages/fsspec/implementations/http.py", line 676, in seek

raise ValueError("Cannot seek streaming HTTP file")

ValueError: Cannot seek streaming HTTP file

```

## Environment info

- `datasets` version: 1.18.3

- Platform: Linux-5.11.0-1027-aws-x86_64-with-glibc2.31

- Python version: 3.9.6

- PyArrow version: 6.0.1

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3677/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3677/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3676 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3676/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3676/comments | https://api.github.com/repos/huggingface/datasets/issues/3676/events | https://github.com/huggingface/datasets/issues/3676 | 1,123,096,362 | I_kwDODunzps5C8Rcq | 3,676 | `None` replaced by `[]` after first batch in map | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.git... | null | [

"It looks like this is because of this behavior in pyarrow:\r\n```python\r\nimport pyarrow as pa\r\n\r\narr = pa.array([None, [0]])\r\nreconstructed_arr = pa.ListArray.from_arrays(arr.offsets, arr.values)\r\nprint(reconstructed_arr.to_pylist())\r\n# [[], [0]]\r\n```\r\n\r\nIt seems that `arr.offsets` can reconstruc... | 1,643,895,408,000 | 1,666,962,800,000 | 1,666,962,800,000 | MEMBER | null | null | null | Sometimes `None` can be replaced by `[]` when running map:

```python

from datasets import Dataset

ds = Dataset.from_dict({"a": range(4)})

ds = ds.map(lambda x: {"b": [[None, [0]]]}, batched=True, batch_size=1, remove_columns=["a"])

print(ds.to_pandas())

# b

# 0 [None, [0]]

# 1 [[], [0]]

# 2 [[], [0]]

# 3 [[], [0]]

```

This issue has been experienced when running the `run_qa.py` example from `transformers` (see issue https://github.com/huggingface/transformers/issues/15401)

This can be due to a bug in when casting `None` in nested lists. Casting only happens after the first batch, since the first batch is used to infer the feature types.

cc @sgugger | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3676/reactions",

"total_count": 3,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 2

} | https://api.github.com/repos/huggingface/datasets/issues/3676/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3675 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3675/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3675/comments | https://api.github.com/repos/huggingface/datasets/issues/3675/events | https://github.com/huggingface/datasets/issues/3675 | 1,123,078,408 | I_kwDODunzps5C8NEI | 3,675 | Add CodeContests dataset | {

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 2067376369,

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request",

"name": "dataset request",

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset"

}

] | closed | false | null | [] | null | [

"@mariosasko Can I take this up?",

"This dataset is now available here: https://huggingface.co/datasets/deepmind/code_contests."

] | 1,643,894,400,000 | 1,658,315,225,000 | 1,658,315,225,000 | CONTRIBUTOR | null | null | null | ## Adding a Dataset

- **Name:** CodeContests

- **Description:** CodeContests is a competitive programming dataset for machine-learning.

- **Paper:**

- **Data:** https://github.com/deepmind/code_contests

- **Motivation:** This dataset was used when training [AlphaCode](https://deepmind.com/blog/article/Competitive-programming-with-AlphaCode).

Instructions to add a new dataset can be found [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md).

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3675/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3675/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3674 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3674/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3674/comments | https://api.github.com/repos/huggingface/datasets/issues/3674/events | https://github.com/huggingface/datasets/pull/3674 | 1,123,027,874 | PR_kwDODunzps4yBe17 | 3,674 | Add FrugalScore metric | {

"login": "moussaKam",

"id": 28675016,

"node_id": "MDQ6VXNlcjI4Njc1MDE2",

"avatar_url": "https://avatars.githubusercontent.com/u/28675016?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/moussaKam",

"html_url": "https://github.com/moussaKam",

"followers_url": "https://api.github.com/users/moussaKam/followers",

"following_url": "https://api.github.com/users/moussaKam/following{/other_user}",

"gists_url": "https://api.github.com/users/moussaKam/gists{/gist_id}",

"starred_url": "https://api.github.com/users/moussaKam/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/moussaKam/subscriptions",

"organizations_url": "https://api.github.com/users/moussaKam/orgs",

"repos_url": "https://api.github.com/users/moussaKam/repos",

"events_url": "https://api.github.com/users/moussaKam/events{/privacy}",

"received_events_url": "https://api.github.com/users/moussaKam/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"@lhoestq \r\n\r\nThe model used by default (`moussaKam/frugalscore_tiny_bert-base_bert-score`) is a tiny model.\r\n\r\nI still want to make one modification before merging.\r\nI would like to load the model checkpoint once. Do you think it's a good idea if I load it in `_download_and_prepare`? In this case should ... | 1,643,891,332,000 | 1,645,459,124,000 | 1,645,459,124,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3674",

"html_url": "https://github.com/huggingface/datasets/pull/3674",

"diff_url": "https://github.com/huggingface/datasets/pull/3674.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3674.patch",

"merged_at": 1645459124000

} | This pull request add FrugalScore metric for NLG systems evaluation.

FrugalScore is a reference-based metric for NLG models evaluation. It is based on a distillation approach that allows to learn a fixed, low cost version of any expensive NLG metric, while retaining most of its original performance.

Paper: https://arxiv.org/abs/2110.08559?context=cs

Github: https://github.com/moussaKam/FrugalScore

@lhoestq | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3674/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3674/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3673 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3673/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3673/comments | https://api.github.com/repos/huggingface/datasets/issues/3673/events | https://github.com/huggingface/datasets/issues/3673 | 1,123,010,520 | I_kwDODunzps5C78fY | 3,673 | `load_dataset("snli")` is different from dataset viewer | {

"login": "pietrolesci",

"id": 61748653,

"node_id": "MDQ6VXNlcjYxNzQ4NjUz",

"avatar_url": "https://avatars.githubusercontent.com/u/61748653?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/pietrolesci",

"html_url": "https://github.com/pietrolesci",

"followers_url": "https://api.github.com/users/pietrolesci/followers",

"following_url": "https://api.github.com/users/pietrolesci/following{/other_user}",

"gists_url": "https://api.github.com/users/pietrolesci/gists{/gist_id}",

"starred_url": "https://api.github.com/users/pietrolesci/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/pietrolesci/subscriptions",

"organizations_url": "https://api.github.com/users/pietrolesci/orgs",

"repos_url": "https://api.github.com/users/pietrolesci/repos",

"events_url": "https://api.github.com/users/pietrolesci/events{/privacy}",

"received_events_url": "https://api.github.com/users/pietrolesci/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

},

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp... | closed | false | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.c... | null | [

"Yes, we decided to replace the encoded label with the corresponding label when possible in the dataset viewer. But\r\n1. maybe it's the wrong default\r\n2. we could find a way to show both (with a switch, or showing both ie. `0 (neutral)`).\r\n",

"Hi @severo,\r\n\r\nThanks for clarifying. \r\n\r\nI think this de... | 1,643,890,243,000 | 1,645,010,551,000 | 1,644,598,881,000 | NONE | null | null | null | ## Describe the bug

The dataset that is downloaded from the Hub via `load_dataset("snli")` is different from what is available in the dataset viewer. In the viewer the labels are not encoded (i.e., "neutral", "entailment", "contradiction"), while the downloaded dataset shows the encoded labels (i.e., 0, 1, 2).

Is this expected?

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version:

- Platform: Ubuntu 20.4

- Python version: 3.7

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3673/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3673/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3672 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3672/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3672/comments | https://api.github.com/repos/huggingface/datasets/issues/3672/events | https://github.com/huggingface/datasets/pull/3672 | 1,122,980,556 | PR_kwDODunzps4yBUrZ | 3,672 | Prioritize `module.builder_kwargs` over defaults in `TestCommand` | {

"login": "lvwerra",

"id": 8264887,

"node_id": "MDQ6VXNlcjgyNjQ4ODc=",

"avatar_url": "https://avatars.githubusercontent.com/u/8264887?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lvwerra",

"html_url": "https://github.com/lvwerra",

"followers_url": "https://api.github.com/users/lvwerra/followers",

"following_url": "https://api.github.com/users/lvwerra/following{/other_user}",

"gists_url": "https://api.github.com/users/lvwerra/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lvwerra/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lvwerra/subscriptions",

"organizations_url": "https://api.github.com/users/lvwerra/orgs",

"repos_url": "https://api.github.com/users/lvwerra/repos",

"events_url": "https://api.github.com/users/lvwerra/events{/privacy}",

"received_events_url": "https://api.github.com/users/lvwerra/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 1,643,888,322,000 | 1,643,978,240,000 | 1,643,978,239,000 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3672",

"html_url": "https://github.com/huggingface/datasets/pull/3672",

"diff_url": "https://github.com/huggingface/datasets/pull/3672.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3672.patch",

"merged_at": 1643978239000

} | This fixes a bug in the `TestCommand` where multiple kwargs for `name` were passed if it was set in both default and `module.builder_kwargs`. Example error:

```Python

Traceback (most recent call last):

File "create_metadata.py", line 96, in <module>

main(**vars(args))

File "create_metadata.py", line 86, in main

metadata_command.run()

File "/opt/conda/lib/python3.7/site-packages/datasets/commands/test.py", line 144, in run

for j, builder in enumerate(get_builders()):

File "/opt/conda/lib/python3.7/site-packages/datasets/commands/test.py", line 141, in get_builders

name=name, cache_dir=self._cache_dir, data_dir=self._data_dir, **module.builder_kwargs

TypeError: type object got multiple values for keyword argument 'name'

```

Let me know what you think. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3672/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3672/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3671 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3671/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3671/comments | https://api.github.com/repos/huggingface/datasets/issues/3671/events | https://github.com/huggingface/datasets/issues/3671 | 1,122,864,253 | I_kwDODunzps5C7Yx9 | 3,671 | Give an estimate of the dataset size in DatasetInfo | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] | open | false | null | [] | null | [] | 1,643,881,630,000 | 1,643,881,630,000 | null | CONTRIBUTOR | null | null | null | **Is your feature request related to a problem? Please describe.**

Currently, only part of the datasets provide `dataset_size`, `download_size`, `size_in_bytes` (and `num_bytes` and `num_examples` inside `splits`). I would want to get this information, or an estimation, for all the datasets.

**Describe the solution you'd like**

- get access to the git information for the dataset files hosted on the hub

- look at the [`Content-Length`](https://developer.mozilla.org/en-US/docs/Web/HTTP/Headers/Content-Length) for the files served by HTTP

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3671/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3671/timeline | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/3670 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3670/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3670/comments | https://api.github.com/repos/huggingface/datasets/issues/3670/events | https://github.com/huggingface/datasets/pull/3670 | 1,122,439,827 | PR_kwDODunzps4x_kBx | 3,670 | feat: 🎸 generate info if dataset_infos.json does not exist | {

"login": "severo",

"id": 1676121,

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/severo",

"html_url": "https://github.com/severo",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"organizations_url": "https://api.github.com/users/severo/orgs",

"repos_url": "https://api.github.com/users/severo/repos",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"received_events_url": "https://api.github.com/users/severo/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"It's a first attempt at solving https://github.com/huggingface/datasets/issues/3013.",

"I only kept these ones:\r\n```\r\n path: str,\r\n data_files: Optional[Union[Dict, List, str]] = None,\r\n download_config: Optional[DownloadConfig] = None,\r\n download_mode: Optional[GenerateMode] = None,\r\n ... | 1,643,839,916,000 | 1,645,459,031,000 | 1,645,459,030,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3670",

"html_url": "https://github.com/huggingface/datasets/pull/3670",

"diff_url": "https://github.com/huggingface/datasets/pull/3670.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3670.patch",

"merged_at": 1645459030000

} | in get_dataset_infos(). Also: add the `use_auth_token` parameter, and create get_dataset_config_info()

✅ Closes: #3013 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3670/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3670/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3669 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3669/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3669/comments | https://api.github.com/repos/huggingface/datasets/issues/3669/events | https://github.com/huggingface/datasets/pull/3669 | 1,122,335,622 | PR_kwDODunzps4x_OTI | 3,669 | Common voice validated partition | {

"login": "shalymin-amzn",

"id": 98762373,

"node_id": "U_kgDOBeL-hQ",

"avatar_url": "https://avatars.githubusercontent.com/u/98762373?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/shalymin-amzn",

"html_url": "https://github.com/shalymin-amzn",

"followers_url": "https://api.github.com/users/shalymin-amzn/followers",

"following_url": "https://api.github.com/users/shalymin-amzn/following{/other_user}",

"gists_url": "https://api.github.com/users/shalymin-amzn/gists{/gist_id}",

"starred_url": "https://api.github.com/users/shalymin-amzn/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/shalymin-amzn/subscriptions",

"organizations_url": "https://api.github.com/users/shalymin-amzn/orgs",

"repos_url": "https://api.github.com/users/shalymin-amzn/repos",

"events_url": "https://api.github.com/users/shalymin-amzn/events{/privacy}",

"received_events_url": "https://api.github.com/users/shalymin-amzn/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Hi @patrickvonplaten - could you please advise whether this would be a welcomed change, and if so, who I consult regarding the unit-tests?",

"I'd be happy with adding this change. @anton-l @lhoestq - what do you think?",

"Cool ! I just fixed the tests by adding a dummy `validated.tsv` file in the dummy data ar... | 1,643,832,283,000 | 1,644,341,212,000 | 1,644,340,992,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3669",

"html_url": "https://github.com/huggingface/datasets/pull/3669",

"diff_url": "https://github.com/huggingface/datasets/pull/3669.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3669.patch",

"merged_at": 1644340992000

} | This patch adds access to the 'validated' partitions of CommonVoice datasets (provided by the dataset creators but not available in the HuggingFace interface yet).

As 'validated' contains significantly more data than 'train' (although it contains both test and validation, so one needs to be careful there), it can be useful to train better models where no strict comparison with the previous work is intended. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3669/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3669/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3668 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3668/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3668/comments | https://api.github.com/repos/huggingface/datasets/issues/3668/events | https://github.com/huggingface/datasets/issues/3668 | 1,122,261,736 | I_kwDODunzps5C5Fro | 3,668 | Couldn't cast array of type string error with cast_column | {

"login": "R4ZZ3",

"id": 25264037,

"node_id": "MDQ6VXNlcjI1MjY0MDM3",

"avatar_url": "https://avatars.githubusercontent.com/u/25264037?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/R4ZZ3",

"html_url": "https://github.com/R4ZZ3",

"followers_url": "https://api.github.com/users/R4ZZ3/followers",

"following_url": "https://api.github.com/users/R4ZZ3/following{/other_user}",

"gists_url": "https://api.github.com/users/R4ZZ3/gists{/gist_id}",

"starred_url": "https://api.github.com/users/R4ZZ3/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/R4ZZ3/subscriptions",

"organizations_url": "https://api.github.com/users/R4ZZ3/orgs",

"repos_url": "https://api.github.com/users/R4ZZ3/repos",

"events_url": "https://api.github.com/users/R4ZZ3/events{/privacy}",

"received_events_url": "https://api.github.com/users/R4ZZ3/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | null | [] | null | [

"Hi ! I wasn't able to reproduce the error, are you still experiencing this ? I tried calling `cast_column` on a string column containing paths.\r\n\r\nIf you manage to share a reproducible code example that would be perfect",

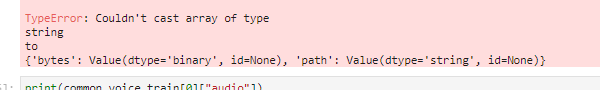

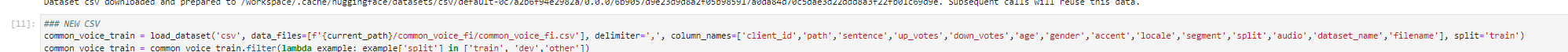

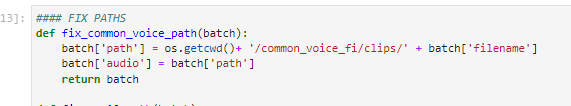

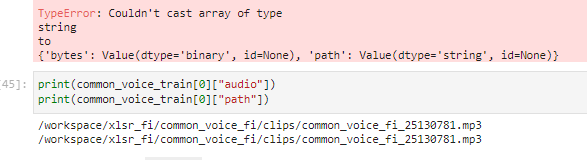

"Hi,\r\n\r\nI think my team mate got this solved. Clolsing it for now and will reopen i... | 1,643,826,809,000 | 1,658,237,784,000 | 1,658,237,784,000 | NONE | null | null | null | ## Describe the bug

In OVH cloud during Huggingface Robust-speech-recognition event on a AI training notebook instance using jupyter lab and running jupyter notebook When using the dataset.cast_column("audio",Audio(sampling_rate=16_000))

method I get error

This was working with datasets version 1.17.1.dev0

but now with version 1.18.3 produces the error above.

## Steps to reproduce the bug

load dataset:

remove columns:

run my fix_path function.

This also creates the audio column that is referring to the absolute file path of the audio

Then I concatenate few other datasets and finally try the cast_column method

but get error:

## Expected results

A clear and concise description of the expected results.

## Actual results

Specify the actual results or traceback.

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 1.18.3

- Platform:

OVH Cloud, AI Training section, container for Huggingface Robust Speech Recognition event image(baaastijn/ovh_huggingface)

- Python version: 3.8.8

- PyArrow version:

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3668/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3668/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3667 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3667/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3667/comments | https://api.github.com/repos/huggingface/datasets/issues/3667/events | https://github.com/huggingface/datasets/pull/3667 | 1,122,060,630 | PR_kwDODunzps4x-Ujt | 3,667 | Process .opus files with torchaudio | {

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | {

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "... | null | [

"Note that torchaudio is maybe less practical to use for TF or JAX users.\r\nThis is not in the scope of this PR, but in the future if we manage to find a way to let the user control the decoding it would be nice",

"> Note that torchaudio is maybe less practical to use for TF or JAX users. This is not in the scop... | 1,643,815,394,000 | 1,643,988,578,000 | 1,643,988,578,000 | CONTRIBUTOR | null | true | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3667",

"html_url": "https://github.com/huggingface/datasets/pull/3667",

"diff_url": "https://github.com/huggingface/datasets/pull/3667.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3667.patch",

"merged_at": null

} | @anton-l suggested to proccess .opus files with `torchaudio` instead of `soundfile` as it's faster:

(moreover, I didn't manage to load .opus files with `soundfile` / `librosa` locally on any my machine anyway for some reason, even with `ffmpeg` installed).

For now my current changes work with locally stored file:

```python

# download sample opus file (from MultilingualSpokenWords dataset)

!wget https://huggingface.co/datasets/polinaeterna/test_opus/resolve/main/common_voice_tt_17737010.opus

from datasets import Dataset, Audio

audio_path = "common_voice_tt_17737010.opus"

dataset = Dataset.from_dict({"audio": [audio_path]}).cast_column("audio", Audio(48000))

dataset[0]

# {'audio': {'path': 'common_voice_tt_17737010.opus',

# 'array': array([ 0.0000000e+00, 0.0000000e+00, 3.0517578e-05, ...,

# -6.1035156e-05, 6.1035156e-05, 0.0000000e+00], dtype=float32),

# 'sampling_rate': 48000}}

```

But it doesn't work when loading inside s dataset from bytes (I checked on [MultilingualSpokenWords](https://github.com/huggingface/datasets/pull/3666), the PR is a draft now, maybe the bug is somewhere there )

```python

import torchaudio

with open(audio_path, "rb") as b:

print(torchaudio.load(b))

# RuntimeError: Error loading audio file: failed to open file <in memory buffer>

``` | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3667/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3667/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3666 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3666/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3666/comments | https://api.github.com/repos/huggingface/datasets/issues/3666/events | https://github.com/huggingface/datasets/pull/3666 | 1,122,058,894 | PR_kwDODunzps4x-ULz | 3,666 | process .opus files (for Multilingual Spoken Words) | {

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"@lhoestq I still have problems with processing `.opus` files with `soundfile` so I actually cannot fully check that it works but it should... Maybe this should be investigated in case of someone else would also have problems with that.\r\n\r\nAlso, as the data is in a private repo on the hub (before we come to a ... | 1,643,815,308,000 | 1,645,524,243,000 | 1,645,524,233,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3666",

"html_url": "https://github.com/huggingface/datasets/pull/3666",

"diff_url": "https://github.com/huggingface/datasets/pull/3666.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3666.patch",

"merged_at": 1645524233000

} | Opus files requires `libsndfile>=1.0.30`. Add check for this version and tests.

**outdated:**

Add [Multillingual Spoken Words dataset](https://mlcommons.org/en/multilingual-spoken-words/)

You can specify multiple languages for downloading 😌:

```python

ds = load_dataset("datasets/ml_spoken_words", languages=["ar", "tt"])

```

1. I didn't take into account that each time you pass a set of languages the data for a specific language is downloaded even if it was downloaded before (since these are custom configs like `ar+tt` and `ar+tt+br`. Maybe that wasn't a good idea?

2. The script will have to be slightly changed after merge of https://github.com/huggingface/datasets/pull/3664

2. Just can't figure out what wrong with dummy files... 😞 Maybe we should get rid of them at some point 😁 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3666/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3666/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3665 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3665/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3665/comments | https://api.github.com/repos/huggingface/datasets/issues/3665/events | https://github.com/huggingface/datasets/pull/3665 | 1,121,753,385 | PR_kwDODunzps4x9TnU | 3,665 | Fix MP3 resampling when a dataset's audio files have different sampling rates | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [] | 1,643,797,905,000 | 1,643,799,146,000 | 1,643,799,146,000 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3665",

"html_url": "https://github.com/huggingface/datasets/pull/3665",

"diff_url": "https://github.com/huggingface/datasets/pull/3665.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3665.patch",

"merged_at": 1643799145000

} | The resampler needs to be updated if the `orig_freq` doesn't match the audio file sampling rate

Fix https://github.com/huggingface/datasets/issues/3662 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3665/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3665/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3664 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3664/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3664/comments | https://api.github.com/repos/huggingface/datasets/issues/3664/events | https://github.com/huggingface/datasets/pull/3664 | 1,121,233,301 | PR_kwDODunzps4x7mg_ | 3,664 | [WIP] Return local paths to Common Voice | {

"login": "anton-l",

"id": 26864830,

"node_id": "MDQ6VXNlcjI2ODY0ODMw",

"avatar_url": "https://avatars.githubusercontent.com/u/26864830?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/anton-l",

"html_url": "https://github.com/anton-l",

"followers_url": "https://api.github.com/users/anton-l/followers",

"following_url": "https://api.github.com/users/anton-l/following{/other_user}",

"gists_url": "https://api.github.com/users/anton-l/gists{/gist_id}",

"starred_url": "https://api.github.com/users/anton-l/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/anton-l/subscriptions",

"organizations_url": "https://api.github.com/users/anton-l/orgs",

"repos_url": "https://api.github.com/users/anton-l/repos",

"events_url": "https://api.github.com/users/anton-l/events{/privacy}",

"received_events_url": "https://api.github.com/users/anton-l/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Cool thanks for giving it a try @anton-l ! \r\n\r\nWould be very much in favor of having \"real\" paths to the audio files again for non-streaming use cases. At the same time it would be nice to make the audio data loading script as understandable as possible so that the community can easily add audio datasets in ... | 1,643,752,107,000 | 1,645,521,246,000 | 1,645,521,246,000 | MEMBER | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3664",

"html_url": "https://github.com/huggingface/datasets/pull/3664",

"diff_url": "https://github.com/huggingface/datasets/pull/3664.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3664.patch",

"merged_at": null

} | Fixes https://github.com/huggingface/datasets/issues/3663

This is a proposed way of returning the old local file-based generator while keeping the new streaming generator intact.

TODO:

- [ ] brainstorm a bit more on https://github.com/huggingface/datasets/issues/3663 to see if we can do better

- [ ] refactor the heck out of this PR to avoid completely copying the logic between the two generators | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3664/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3664/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3663 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3663/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3663/comments | https://api.github.com/repos/huggingface/datasets/issues/3663/events | https://github.com/huggingface/datasets/issues/3663 | 1,121,067,647 | I_kwDODunzps5C0iJ_ | 3,663 | [Audio] Path of Common Voice cannot be used for audio loading anymore | {

"login": "patrickvonplaten",

"id": 23423619,

"node_id": "MDQ6VXNlcjIzNDIzNjE5",

"avatar_url": "https://avatars.githubusercontent.com/u/23423619?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/patrickvonplaten",

"html_url": "https://github.com/patrickvonplaten",

"followers_url": "https://api.github.com/users/patrickvonplaten/followers",

"following_url": "https://api.github.com/users/patrickvonplaten/following{/other_user}",

"gists_url": "https://api.github.com/users/patrickvonplaten/gists{/gist_id}",

"starred_url": "https://api.github.com/users/patrickvonplaten/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/patrickvonplaten/subscriptions",

"organizations_url": "https://api.github.com/users/patrickvonplaten/orgs",

"repos_url": "https://api.github.com/users/patrickvonplaten/repos",

"events_url": "https://api.github.com/users/patrickvonplaten/events{/privacy}",

"received_events_url": "https://api.github.com/users/patrickvonplaten/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] | closed | false | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_... | null | [

"Having talked to @lhoestq, I see that this feature is no longer supported. \r\n\r\nI really don't think this was a good idea. It is a major breaking change and one for which we don't even have a working solution at the moment, which is bad for PyTorch as we don't want to force people to have `datasets` decode audi... | 1,643,740,810,000 | 1,663,772,589,000 | 1,663,772,182,000 | MEMBER | null | null | null | ## Describe the bug

## Steps to reproduce the bug

```python

from datasets import load_dataset

from torchaudio import load

ds = load_dataset("common_voice", "ab", split="train")

# both of the following commands fail at the moment

load(ds[0]["audio"]["path"])

load(ds[0]["path"])

```

## Expected results

The path should be the complete absolute path to the downloaded audio file not some relative path.

## Actual results

```bash

~/hugging_face/venv_3.9/lib/python3.9/site-packages/torchaudio/backend/sox_io_backend.py in load(filepath, frame_offset, num_frames, normalize, channels_first, format)

150 filepath, frame_offset, num_frames, normalize, channels_first, format)

151 filepath = os.fspath(filepath)

--> 152 return torch.ops.torchaudio.sox_io_load_audio_file(

153 filepath, frame_offset, num_frames, normalize, channels_first, format)

154

RuntimeError: Error loading audio file: failed to open file cv-corpus-6.1-2020-12-11/ab/clips/common_voice_ab_19904194.mp3

```

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 1.18.3.dev0

- Platform: Linux-5.4.0-96-generic-x86_64-with-glibc2.27

- Python version: 3.9.1

- PyArrow version: 3.0.0

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3663/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3663/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3662 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3662/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3662/comments | https://api.github.com/repos/huggingface/datasets/issues/3662/events | https://github.com/huggingface/datasets/issues/3662 | 1,121,024,403 | I_kwDODunzps5C0XmT | 3,662 | [Audio] MP3 resampling is incorrect when dataset's audio files have different sampling rates | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Thanks @lhoestq for finding the reason of incorrect resampling. This issue affects all languages which have sound files with different sampling rates such as Turkish and Luganda.",

"@cahya-wirawan - do you know how many languages have different sampling rates in Common Voice? I'm quite surprised to see this for ... | 1,643,738,104,000 | 1,643,799,145,000 | 1,643,799,145,000 | MEMBER | null | null | null | The Audio feature resampler for MP3 gets stuck with the first original frequencies it meets, which leads to subsequent decoding to be incorrect.

Here is a code to reproduce the issue:

Let's first consider two audio files with different sampling rates 32000 and 16000:

```python

# first download a mp3 file with sampling_rate=32000

!wget https://file-examples-com.github.io/uploads/2017/11/file_example_MP3_700KB.mp3

import torchaudio

audio_path = "file_example_MP3_700KB.mp3"

audio_path2 = audio_path.replace(".mp3", "_resampled.mp3")

resample = torchaudio.transforms.Resample(32000, 16000) # create a new file with sampling_rate=16000

torchaudio.save(audio_path2, resample(torchaudio.load(audio_path)[0]), 16000)

```

Then we can see an issue here when decoding:

```python

from datasets import Dataset, Audio

dataset = Dataset.from_dict({"audio": [audio_path, audio_path2]}).cast_column("audio", Audio(48000))

dataset[0] # decode the first audio file sets the resampler orig_freq to 32000

print(dataset .features["audio"]._resampler.orig_freq)

# 32000

print(dataset[0]["audio"]["array"].shape) # here decoding is fine

# (1308096,)

dataset = Dataset.from_dict({"audio": [audio_path, audio_path2]}).cast_column("audio", Audio(48000))

dataset[1] # decode the second audio file sets the resampler orig_freq to 16000

print(dataset .features["audio"]._resampler.orig_freq)

# 16000

print(dataset[0]["audio"]["array"].shape) # here decoding uses orig_freq=16000 instead of 32000

# (2616192,)

```

The value of `orig_freq` doesn't change no matter what file needs to be decoded

cc @patrickvonplaten @anton-l @cahya-wirawan @albertvillanova

The issue seems to be here in `Audio.decode_mp3`:

https://github.com/huggingface/datasets/blob/4c417d52def6e20359ca16c6723e0a2855e5c3fd/src/datasets/features/audio.py#L176-L180 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3662/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3662/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3661 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3661/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3661/comments | https://api.github.com/repos/huggingface/datasets/issues/3661/events | https://github.com/huggingface/datasets/pull/3661 | 1,121,000,251 | PR_kwDODunzps4x61ad | 3,661 | Remove unnecessary 'r' arg in | {

"login": "bryant1410",

"id": 3905501,

"node_id": "MDQ6VXNlcjM5MDU1MDE=",

"avatar_url": "https://avatars.githubusercontent.com/u/3905501?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/bryant1410",

"html_url": "https://github.com/bryant1410",

"followers_url": "https://api.github.com/users/bryant1410/followers",

"following_url": "https://api.github.com/users/bryant1410/following{/other_user}",

"gists_url": "https://api.github.com/users/bryant1410/gists{/gist_id}",

"starred_url": "https://api.github.com/users/bryant1410/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/bryant1410/subscriptions",

"organizations_url": "https://api.github.com/users/bryant1410/orgs",

"repos_url": "https://api.github.com/users/bryant1410/repos",

"events_url": "https://api.github.com/users/bryant1410/events{/privacy}",

"received_events_url": "https://api.github.com/users/bryant1410/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"The CI failure is only because of the datasets is missing some sections in their cards - we can ignore that since it's unrelated to this PR"

] | 1,643,736,567,000 | 1,644,253,047,000 | 1,644,249,762,000 | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3661",

"html_url": "https://github.com/huggingface/datasets/pull/3661",

"diff_url": "https://github.com/huggingface/datasets/pull/3661.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3661.patch",

"merged_at": 1644249762000

} | Originally from #3489 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3661/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3661/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3660 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3660/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3660/comments | https://api.github.com/repos/huggingface/datasets/issues/3660/events | https://github.com/huggingface/datasets/pull/3660 | 1,120,982,671 | PR_kwDODunzps4x6xr8 | 3,660 | Change HTTP links to HTTPS | {

"login": "bryant1410",

"id": 3905501,

"node_id": "MDQ6VXNlcjM5MDU1MDE=",

"avatar_url": "https://avatars.githubusercontent.com/u/3905501?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/bryant1410",

"html_url": "https://github.com/bryant1410",

"followers_url": "https://api.github.com/users/bryant1410/followers",

"following_url": "https://api.github.com/users/bryant1410/following{/other_user}",

"gists_url": "https://api.github.com/users/bryant1410/gists{/gist_id}",

"starred_url": "https://api.github.com/users/bryant1410/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/bryant1410/subscriptions",

"organizations_url": "https://api.github.com/users/bryant1410/orgs",

"repos_url": "https://api.github.com/users/bryant1410/repos",

"events_url": "https://api.github.com/users/bryant1410/events{/privacy}",

"received_events_url": "https://api.github.com/users/bryant1410/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [] | 1,643,735,571,000 | 1,663,773,392,000 | null | CONTRIBUTOR | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3660",

"html_url": "https://github.com/huggingface/datasets/pull/3660",

"diff_url": "https://github.com/huggingface/datasets/pull/3660.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/3660.patch",

"merged_at": null

} | I tested the links. I also fixed some typos.

Originally from #3489 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3660/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3660/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3659 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3659/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3659/comments | https://api.github.com/repos/huggingface/datasets/issues/3659/events | https://github.com/huggingface/datasets/issues/3659 | 1,120,913,672 | I_kwDODunzps5Cz8kI | 3,659 | push_to_hub but preview not working | {

"login": "thomas-happify",

"id": 66082334,

"node_id": "MDQ6VXNlcjY2MDgyMzM0",

"avatar_url": "https://avatars.githubusercontent.com/u/66082334?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/thomas-happify",

"html_url": "https://github.com/thomas-happify",

"followers_url": "https://api.github.com/users/thomas-happify/followers",

"following_url": "https://api.github.com/users/thomas-happify/following{/other_user}",

"gists_url": "https://api.github.com/users/thomas-happify/gists{/gist_id}",

"starred_url": "https://api.github.com/users/thomas-happify/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/thomas-happify/subscriptions",

"organizations_url": "https://api.github.com/users/thomas-happify/orgs",

"repos_url": "https://api.github.com/users/thomas-happify/repos",

"events_url": "https://api.github.com/users/thomas-happify/events{/privacy}",

"received_events_url": "https://api.github.com/users/thomas-happify/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 3470211881,

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer",

"name": "dataset-viewer",

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co"

}

] | closed | false | {

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"type": "User",

"site_admin": false

} | [

{

"login": "albertvillanova",

"id": 8515462,

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/albertvillanova",

"html_url": "https://github.com/albertvillanova",

"followers_... | null | [

"Hi @thomas-happify, please note that the preview may take some time before rendering the data.\r\n\r\nI've seen it is already working.\r\n\r\nI close this issue. Please feel free to reopen it if the problem arises again."

] | 1,643,732,637,000 | 1,644,393,637,000 | 1,644,393,637,000 | NONE | null | null | null | ## Dataset viewer issue for '*happifyhealth/twitter_pnn*'

**Link:** *[link to the dataset viewer page](https://huggingface.co/datasets/happifyhealth/twitter_pnn)*

I used

```

dataset.push_to_hub("happifyhealth/twitter_pnn")

```

but the preview is not working.

Am I the one who added this dataset ? Yes

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3659/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3659/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3658 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3658/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3658/comments | https://api.github.com/repos/huggingface/datasets/issues/3658/events | https://github.com/huggingface/datasets/issues/3658 | 1,120,880,395 | I_kwDODunzps5Cz0cL | 3,658 | Dataset viewer issue for *P3* | {

"login": "jeffistyping",

"id": 22351555,

"node_id": "MDQ6VXNlcjIyMzUxNTU1",

"avatar_url": "https://avatars.githubusercontent.com/u/22351555?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jeffistyping",

"html_url": "https://github.com/jeffistyping",

"followers_url": "https://api.github.com/users/jeffistyping/followers",

"following_url": "https://api.github.com/users/jeffistyping/following{/other_user}",

"gists_url": "https://api.github.com/users/jeffistyping/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jeffistyping/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jeffistyping/subscriptions",

"organizations_url": "https://api.github.com/users/jeffistyping/orgs",

"repos_url": "https://api.github.com/users/jeffistyping/repos",

"events_url": "https://api.github.com/users/jeffistyping/events{/privacy}",

"received_events_url": "https://api.github.com/users/jeffistyping/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"The error is now:\r\n\r\n```\r\nStatus code: 400\r\nException: Status400Error\r\nMessage: this dataset is not supported for now.\r\n```\r\n\r\nWe've disabled the dataset viewer for several big datasets like this one. We hope being able to reenable it soon.",

"The list of splits cannot be obtained. cc... | 1,643,731,076,000 | 1,662,625,108,000 | null | NONE | null | null | null | ## Dataset viewer issue for '*P3*'

**Link: https://huggingface.co/datasets/bigscience/P3**

```

Status code: 400

Exception: SplitsNotFoundError

Message: The split names could not be parsed from the dataset config.

```

Am I the one who added this dataset ? No

| {

"url": "https://api.github.com/repos/huggingface/datasets/issues/3658/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/3658/timeline | null | null | false |